Latest posts

LLM_log #004 From Scratch: Working with Text Data — Embeddings for LLMs

Highlights: Before we can build or train a Large Language Model, we need to solve a fundamental problem — LLMs cannot process raw text. In today’s post, we’ll walk through the complete pipeline that converts human-readable text into numerical vectors that a neural network can work with. We’ll cover tokenization, vocabulary building, byte pair encoding, sliding window sampling, and how token and positional embeddings come together to form the final input to a GPT-like transformer.…

Read more

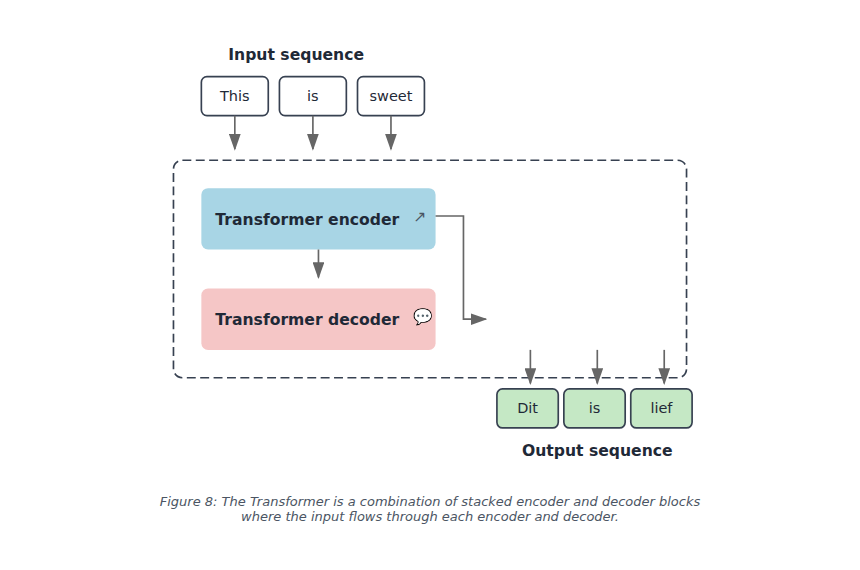

LLM_log #003 Understanding Large Language Models – Transformers illustrative explanation

🚀 Understanding Large Language Models: A Complete Visual Guide

🎯 What You’ll Learn

Large Language Models like GPT-4, Claude, and Llama have transformed how we interact with AI, but understanding what actually happens when you type “The cat sat on the” and the model predicts “mat” can feel like opening a black box. In this comprehensive guide, we’ll demystify the entire process by following a single example through every step of a Transformer’s architecture. You’ll…

Read more

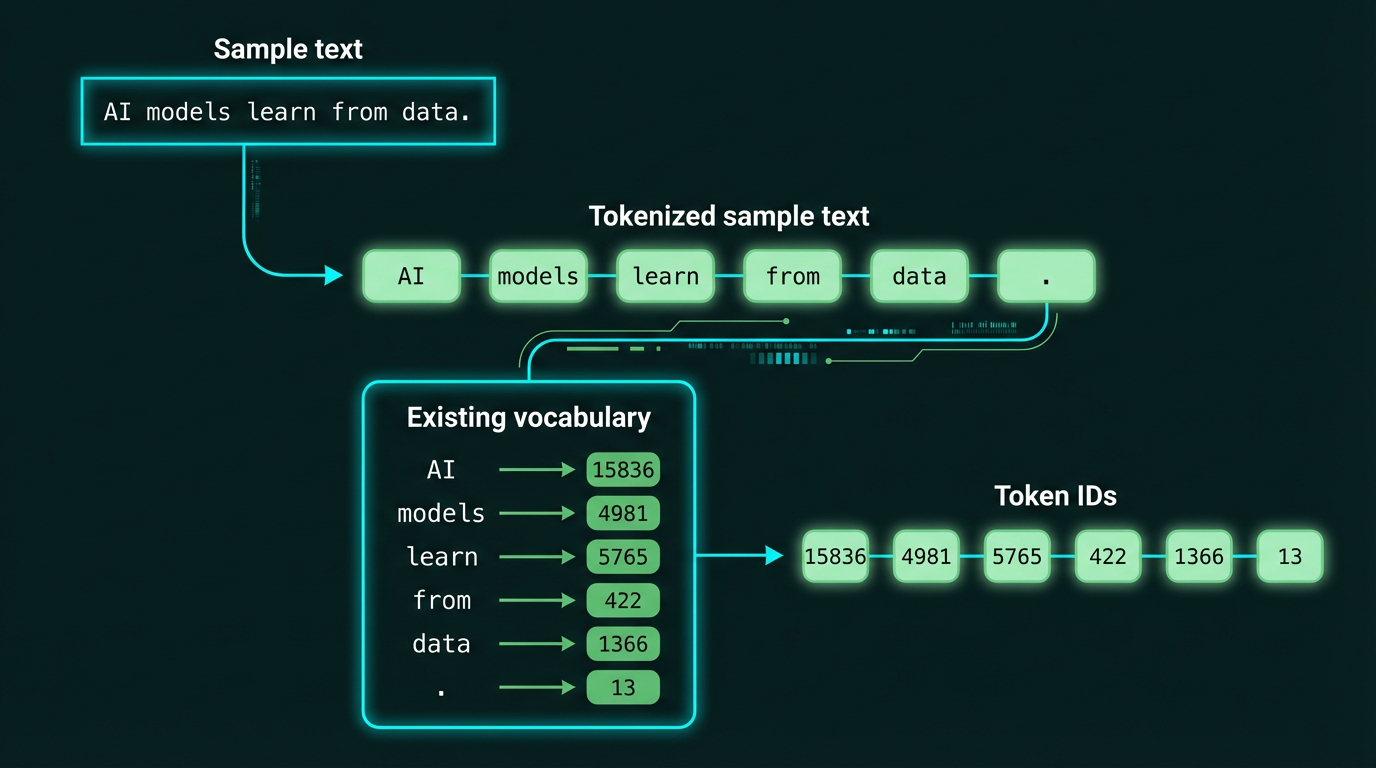

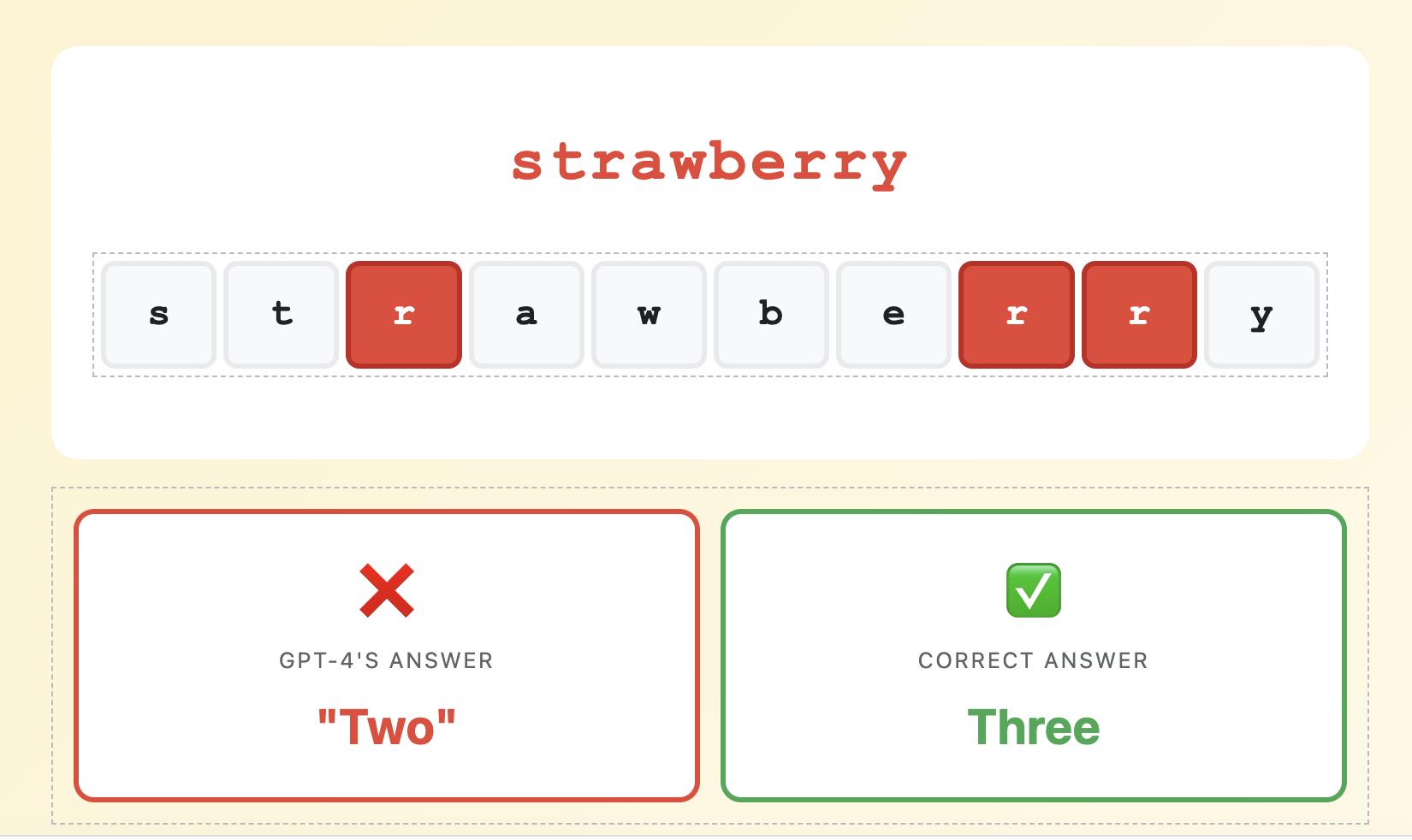

LLM_log #002: Tokenization in Large Language Modelling

Understanding Tokenization in Large Language Models: Why GPT-4 Can’t Count the Letters in “Strawberry”

VLADIMIR MATIC, PhD – DataHacker.rs – January 2025

🍓 “How many letter ‘r’s are in the word strawberry?”

strawberry: s t r a w b e r r y

❌ GPT-4’s answer: “Two”

✅ Correct answer: Three

In early 2024, this simple question stumped GPT-4. A billion-dollar AI model failed at counting letters in a 10-letter word. Why? The answer lies…

Read more

LLM_log #001: Understanding Large Language Models: From Word Counting to Neural Networks

Part 1: The Evolution of Text Representation

DataHacker.rs | January 2026

🚀 The AI Revolution: How We Got Here

The period from 2012 to today marked a fundamental transformation in artificial intelligence. Deep neural networks enabled systems that can understand and generate human language with unprecedented accuracy.

Figure 0: From word embeddings to reasoning-capable AI systems – the complete LLM timeline

The ChatGPT Moment

November 2022 brought ChatGPT – an application that: Reached 1 million…

Read more

dH #027: A Unified Framework for Deep Learning Architectures: From Sequences to Graphs

🎯 What You’ll Learn

In this comprehensive guide, we’ll explore a unified framework for understanding deep learning architectures across different data types. You’ll learn how to design models based on fundamental principles of invariance and equivariance, understand the spectrum from domain-specific to general-purpose approaches, master the building blocks of temporal sequence models including RNNs and Transformers, and discover how spatial convolution models and graph neural networks all fit into one coherent paradigm. By the end,…

Read more

dH #025 Image Segmentation Fundamentals: From Point Detection to Advanced Edge Linking

Mastering Image Segmentation Fundamentals: From Point Detection to Advanced Edge Linking

🎯 What You’ll Learn

In this comprehensive guide, we’ll explore the fundamental techniques of image segmentation and edge detection that form the backbone of modern computer vision. You’ll understand the mathematical foundations of derivatives in image processing, master point and line detection methods using second-order derivatives, learn how to implement sophisticated edge detection algorithms including the Canny detector, and discover how to link edges…

Read more

dH #026 Understanding Transformers with Claude – Visualized and Intuitive – >>!!!READ THIS!!! <<

🤖 Understanding Transformers: A Progressive Q&A Journey

From basic embeddings to self-attention to generation, built step by step through questions

Prerequisites: Basic understanding of matrix multiplication

Reading time: 20-30 minutes

What you’ll learn: How transformers work from first principles

📚 What is a Transformer?

Architecture: Neural network for processing sequences (text, images, etc.)

Key Innovation: Self-attention mechanism (all words look at all other words)

Parallel Processing: Unlike RNNs, processes the entire sequence simultaneously

Used in:…

Read more

dH #020: Introduction to Retrieval Augmented Language Modeling

Highlight: Retrieval-augmented language modeling represents one of the most exciting frontiers in AI, combining the parametric knowledge of Large Language Models with the dynamic power of external knowledge retrieval. You’ll discover how groundbreaking systems like RETRO, RAG, and modern frameworks like RePlug are revolutionizing how AI accesses and utilizes information, moving beyond the limitations of static training data. Let’s begin!

Tutorial Overview:

1. Introduction to Retrieval Augmented Language Modeling

Overview

Hello and welcome back! In…

Read more

Featured posts

#004 How to smooth and sharpen an image in OpenCV?

Highlight: In our previous posts we mastered some basic image processing techniques, and now we are ready to move on to more advanced concepts. In this post, we are going to explain how to blur and sharpen images. When we want to blur or sharpen an image, we need to apply a linear filter. You will learn several types of filters that are often used in image processing. In addition, we will also show…

Read more

#000 How to access and edit pixel values in OpenCV with Python?

Highlight: Welcome to another datahacker.rs post series! We are going to talk about digital image processing using OpenCV in Python. In this series, you will be introduced to the basic concepts of OpenCV and you will be able to start writing your first scripts in Python. Our first post will provide you with an introduction to the OpenCV library and some basic concepts that are necessary for building your computer vision applications. You will learn…

Read more

#006 Linear Algebra – Inner or Dot Product of two Vectors

Highlight: In this post we will review one of the fundamental operators in Linear Algebra, known as the dot product or inner product of two vectors. Most of you are already familiar with this operator, and it is actually quite easy to explain. Still, we will give some additional insights as well as some basic information on how to use it in Python.

Tutorial Overview:

Dot product :: Definition and properties

Linear functions…

Read more

#008 Linear Algebra – Eigenvectors and Eigenvalues

Highlight: In this post we will talk about eigenvalues and eigenvectors. Many machine learning and linear algebra practitioners find this concept puzzling. However, building on the solid introduction provided in our previous posts, we will be able to explain eigenvalues and eigenvectors and develop a good visual interpretation and intuition for them. We give some Python recipes as well.

Tutorial Overview:

Intuition about eigenvectors…

Read more

#011 TF How to improve the model performance with Data Augmentation?

Highlights: In this post we will show the benefits of data augmentation techniques as a way to improve the performance of a model. This method is especially useful when we do not have enough data at our disposal.

Tutorial Overview:

Training without data augmentation

What is data augmentation?

Training with data augmentation

Visualization

1. Training without data augmentation

A familiar question is “Why should we use data augmentation?” So, let’s see the answer. In order…

Read more