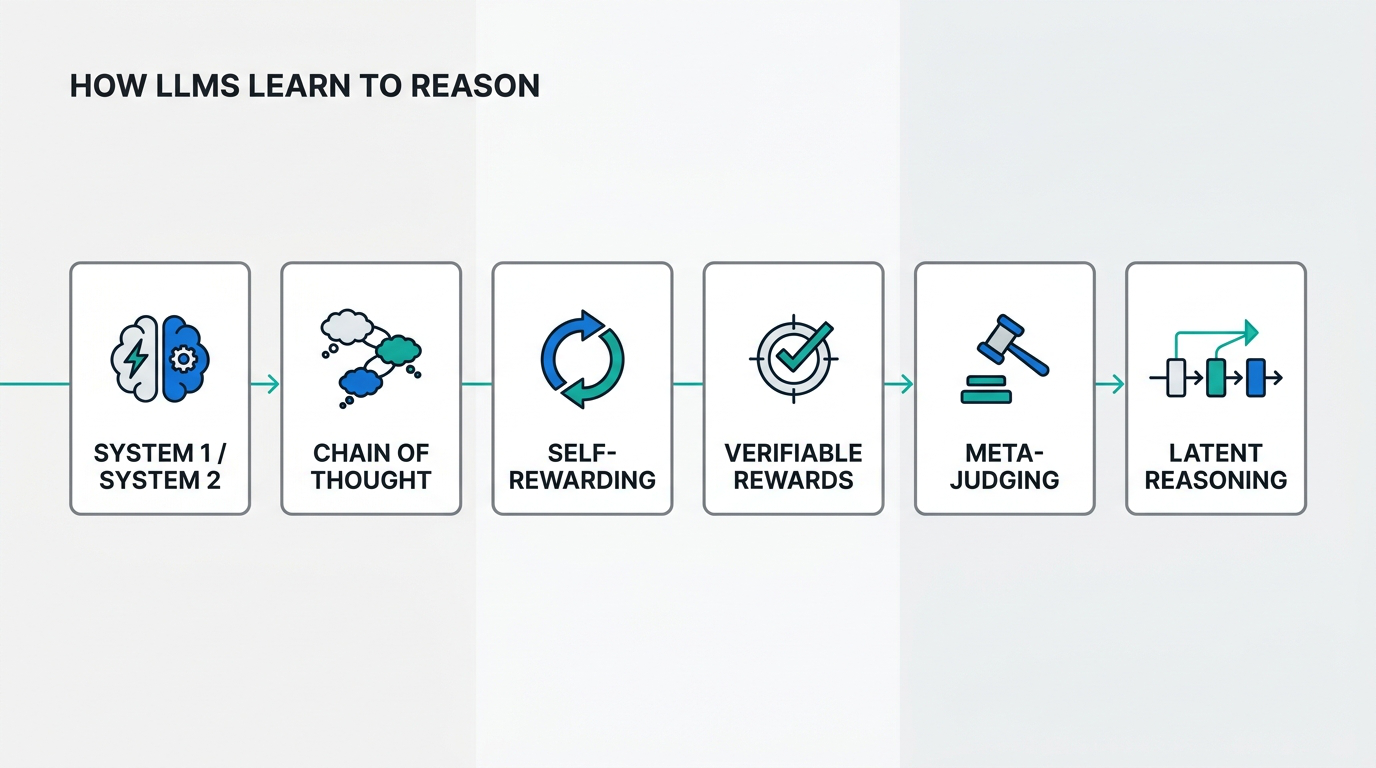

Highlights: Jason Weston traces the arc from early neural language models to self-improving LLMs that generate their own training data and evaluate their own reasoning. System 1 vs System 2: fixed-compute pattern-matching vs deliberate multi-step reasoning, and why the same LLM implements both. Chain-of-Thought prompting: adding “Let’s think step by step” jumps GSM8K accuracy from ~10% to 40–50%; few-shot CoT hits 90%+ on MultiArith. CoVe + S2A: Chain-of-Verification reduces hallucinations 3× on knowledge list…

Master Data Science

Latest posts

LLM_log #021: How LLMs Learn to Reason — From Chain-of-Thought to Self-Rewarding and Meta-Judges

LLM_log #020: Language Agents — Memory, Reasoning, and Planning

Highlights: Yu Su’s guest lecture in the UC Berkeley CS294-280 course argues language agents are not “LLM + tools” but a new evolutionary stage of machine intelligence. We walk through the agent-first framing and three concrete research pillars — long-term memory (HippoRAG), implicit reasoning (Grokked Transformers), and model-based planning (WebDreamer) — that map directly to classical AI problems re-examined through the lens of LLMs. Agent-first framing: token generation is itself an action; the inner monologue…

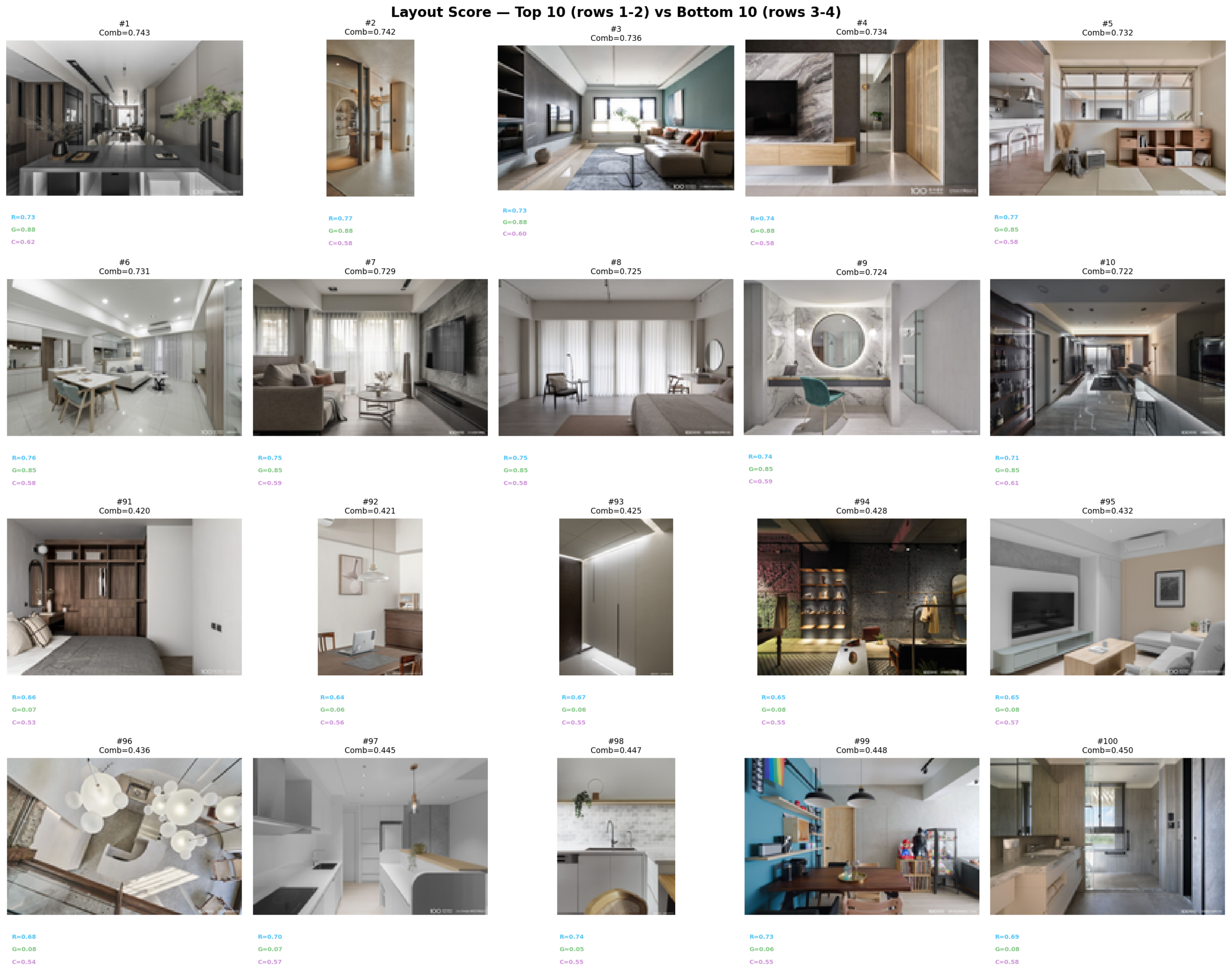

LLM_log #019: Layout Scoring — Does Furniture Placement Follow the Rule of Thirds?

Highlights: Can we measure spatial composition in living room photographs? We score 100 interior images using saliency-based rule-of-thirds alignment, Gemini Vision layout ratings, and CLIP composition prompts, then cross-correlate with color scores from #018 to find rooms that nail both color and layout. Method 1 (Rule of Thirds + Balance): gradient saliency → Gaussian-weighted power point alignment + visual balance index. Method 2 (Gemini Vision Layout): send each image to Gemini 2.5…

LLM_log #018: Color Harmony Ranking — Three Methods, 500 Living Rooms

Highlights: Can three completely independent methods agree on which living room has the best colors? We rank 500 interior images using Cohen-Or harmonic templates, Hasler-Süsstrunk colorfulness, and CLIP IQA with 44 color-focused prompts, then measure whether they correlate at all. Method 1 (Cohen-Or): K-means palette → saturation-weighted hue histogram → sweep 7 harmonic templates × 36 rotations → H/T/S composite. Method 2 (Hasler-Süsstrunk): opponent channels (rg, yb) → colorfulness + 4×4 spatial…

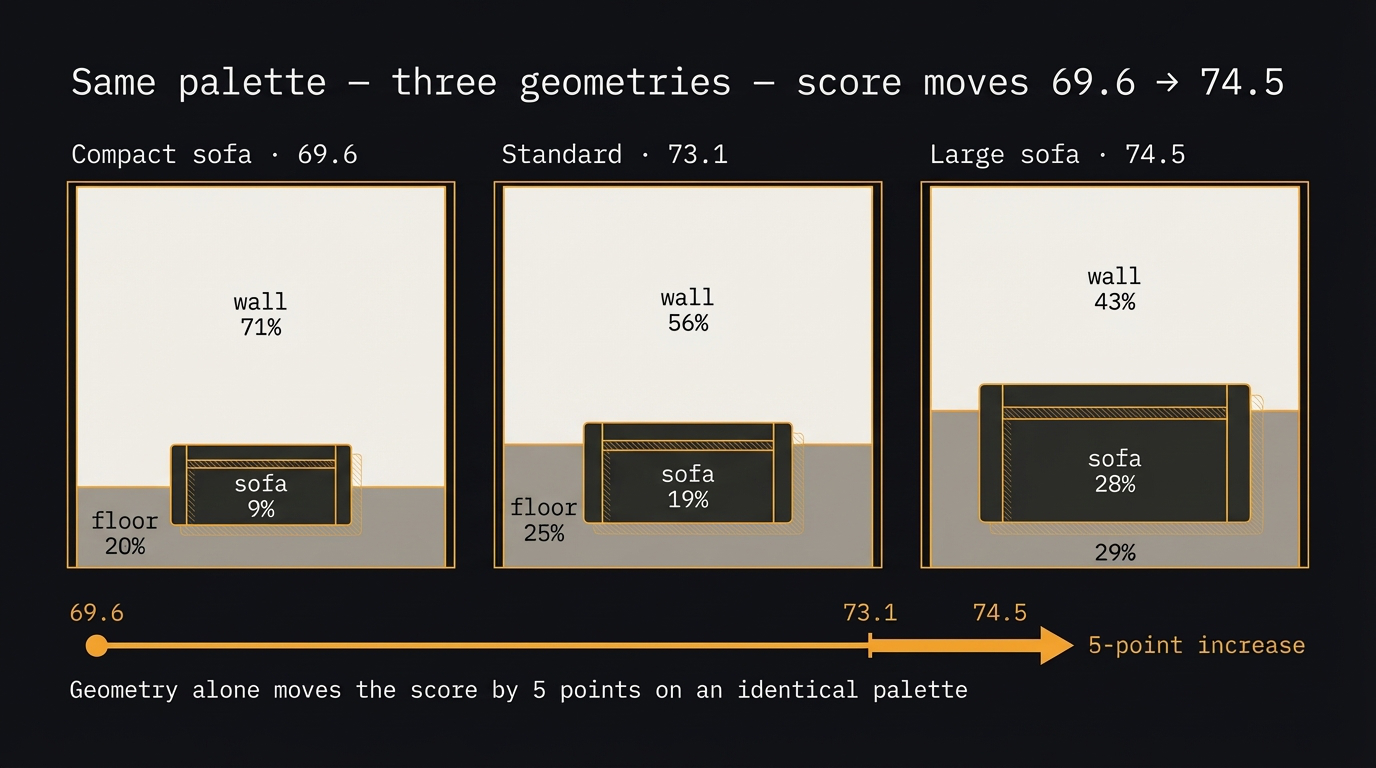

LLM_log #017: Scoring Color Harmony — From Two Squares to a Room

Highlights: Can you score color quality algorithmically? Not as taste — as math. This post builds a scoring system from first principles: two adjacent color squares, then triplets, then a real room with three spatial regions. We walk through every formula with brand and flag examples you already know, then prove that geometry alone can move the score by five points on an identical palette. Four pair scoring dimensions: contrast (WCAG luminance), harmony (hue peaks),…

LLM_log #016: RGB is for Screens. Lab is for Humans — Color Scoring for Living Room Images

Highlights: Every computer vision pipeline that touches color starts with the same mistake: using RGB. RGB is built for screens, not for human perception. In this post we build a complete color scoring system for living room images — from the right color space (Lab), through palette extraction (K-means), to a two-color harmony scorer tested on 10 global brand palettes. We discover why luxury brands deliberately score low, and what that means for your model.…

LLM_log #015: Fine-Tuning LLMs — Teach a 3B Model to Call Functions with QLoRA + Unsloth on Free Colab T4

Highlights: Every modern LLM agent — from ChatGPT plugins to Claude tools — relies on a single learned skill: outputting a structured JSON function call instead of free text. In this post we teach that skill to a 3-billion parameter model using QLoRA on a free Google Colab T4. We start from the fundamentals — why fine-tuning, when LoRA, how quantization works — then build the full training pipeline from scratch. By the end, your…

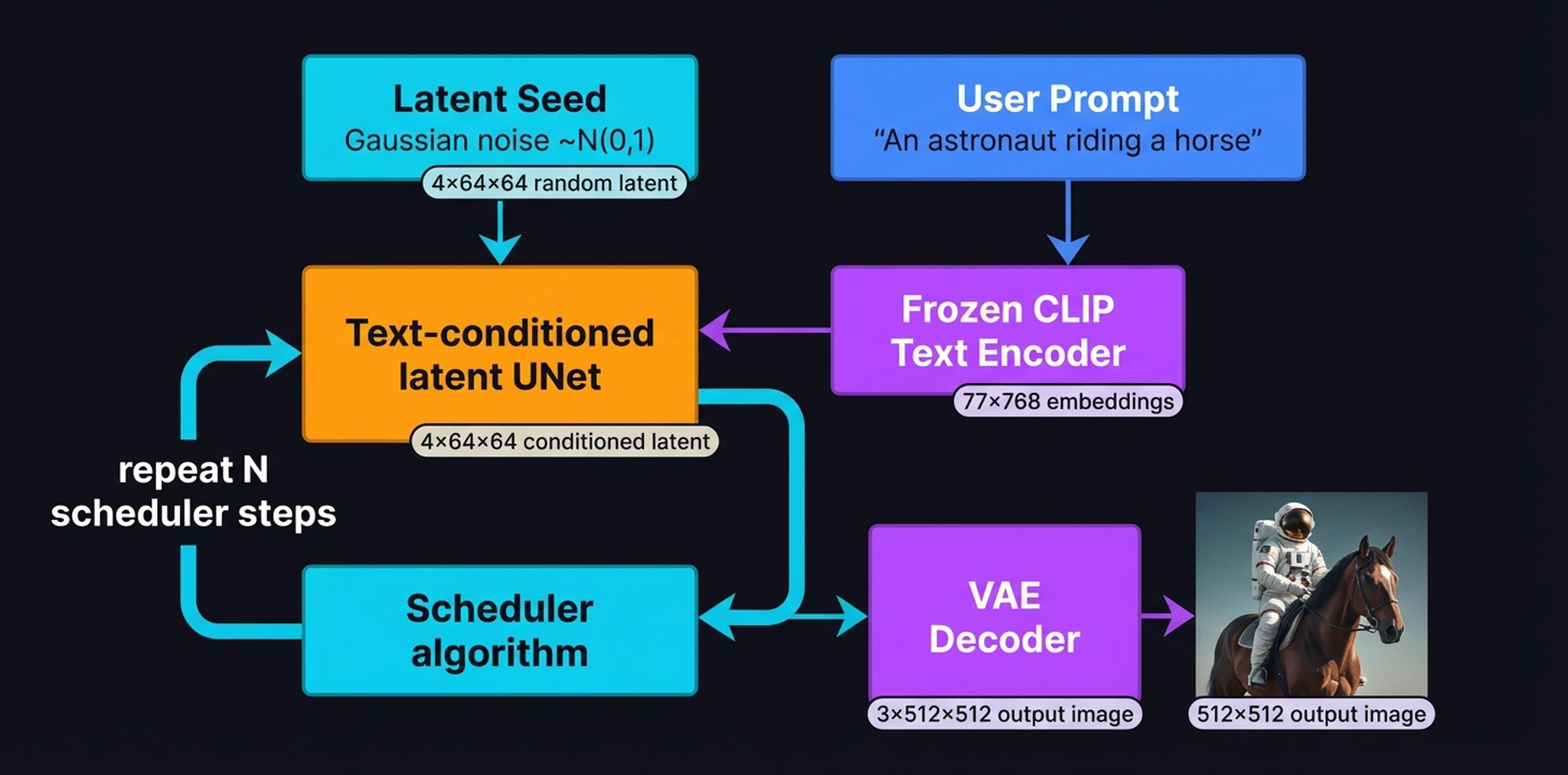

LLM_log #014: Stable Diffusion & Conditional Latent Diffusion — From VAE Compression to Cross-Attention Conditioning

Highlights: Stable Diffusion doesn’t paint an image in one shot — it sculpts one from static, guided by your words. In this post we disassemble the entire machine. We start with the VAE that compresses pixels into a tractable latent space, walk through the forward and reverse diffusion processes, open up the UNet to see how cross-attention physically connects text tokens to spatial regions, and finish with the complete Latent Diffusion architecture diagram that ties…

LLM_log #013: Latent Space — From AutoEncoders to the Engine Inside Stable Diffusion

Highlights: Every time you use Stable Diffusion, DALL-E, or Sora, the model never touches a single pixel during its main computation. It works entirely inside a compressed, structured space of floating-point numbers — a latent space learned by a VAE. In this post we build that space from scratch. We start from the simplest possible compression — an AutoEncoder on MNIST digits — understand why it fails at generation, fix it with the VAE’s probabilistic…

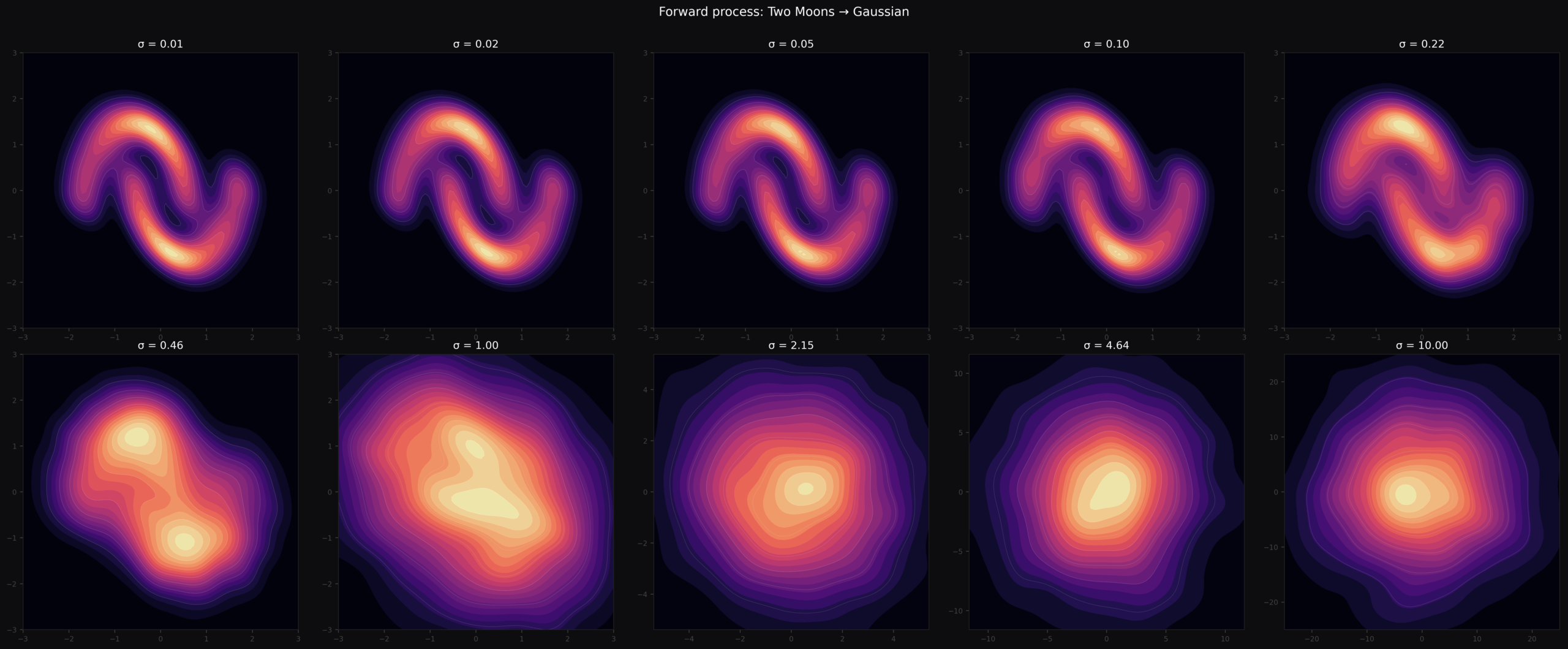

LLM_log #012: Introduction to Diffusion Models — From Noise to Geometry to Sampling

Highlights: In this post we build a complete understanding of diffusion models from the ground up: what they are, how images are represented, how the network is trained, what it geometrically learns, and finally how we turn that geometry into samples using DDIM and DDPM. Every formula is accompanied by concrete numbers you can verify by hand. So let’s begin! Tutorial Overview: What Are Diffusion Models? How Images Are Represented; The Denoiser Network; Noise…

LLM_log #011: Diffusion Models — From Noise to Wolves, Training from Scratch

In this post we build a complete diffusion model from scratch — training a UNet on a custom dataset, implementing the full DDPM pipeline, and understanding the math that makes iterative denoising work. We cover noise schedules, the reparameterization trick, FID evaluation, and three diffusion objectives (ε, x₀, v). By the end you’ll have generated novel images from pure Gaussian noise, and understand why diffusion models overtook GANs as the dominant paradigm for image generation.…

LLM_log #010: Understanding Diffusion Models Through 1D Experiments — From DDPM to Manifold Compactness

Highlights: We implement a complete DDPM from scratch on 1D sine waves: same math as image diffusion, but every intermediate state is plottable. We track 100 parallel trajectories, measure when the model “commits” to a specific sample, then design a controlled experiment that reveals manifold compactness as the key factor determining whether diffusion succeeds or fails. So let’s begin! Tutorial Overview: Why 1D? The Dataset; Forward Process; Model and Training; Generating from Noise; What…

Featured posts

#004 How to smooth and sharpen an image in OpenCV?

Highlight: In our previous posts we mastered some basic image processing techniques and now we are ready to move on to more advanced concepts. In this post, we are going to explain how to blur and sharpen images. When we want to blur or sharpen our image, we need to apply a linear filter. You will learn several types of filters that we often use in image processing. In addition, we will also show…

#000 How to access and edit pixel values in OpenCV with Python?

Highlight: Welcome to another datahacker.rs post series! We are going to talk about digital image processing using OpenCV in Python. In this series, you will be introduced to the basic concepts of OpenCV and you will be able to start writing your first scripts in Python. Our first post will provide you with an introduction to the OpenCV library and some basic concepts that are necessary for building your computer vision applications. You will learn…

#006 Linear Algebra – Inner or Dot Product of two Vectors

Highlight: In this post we will review one of the fundamental operators in Linear Algebra. It is known as the dot product or inner product of two vectors. Most of you are already familiar with this operator, and it’s actually quite easy to explain. Still, we will give some additional insights as well as some basic info on how to use it in Python. Tutorial Overview: Dot product :: Definition and properties; Linear functions…

#008 Linear Algebra – Eigenvectors and Eigenvalues

Highlight: In this post we will talk about eigenvalues and eigenvectors. This concept has proved quite puzzling for many machine learning and linear algebra practitioners. However, with the solid introduction provided in our previous posts, we will be able to explain eigenvalues and eigenvectors and build a good visual interpretation and intuition for these topics. We give some Python recipes as well. Tutorial Overview: Intuition about eigenvectors…

#011 TF How to improve the model performance with Data Augmentation?

Highlights: In this post we will show the benefits of data augmentation techniques as a way to improve the performance of a model. This method is especially useful when we do not have enough data at our disposal. Tutorial Overview: Training without data augmentation; What is data augmentation? Training with data augmentation; Visualization. 1. Training without data augmentation. A familiar question is “why should we use data augmentation?” So, let’s see the answer. In order…

© 2026 Master Data Science.