#001 TF 2.0 An Introduction to TensorFlow 2.0

What is TensorFlow 2.0?

TensorFlow is an open-source library for numerical computation built by the Google Brain team. It is based on data flow graphs: structures that describe how data moves through a series of processing nodes. Each node in the graph represents a mathematical operation, and each connection or edge between nodes is a multidimensional data array, or tensor. More generally, we can say that TensorFlow is a software framework for numerical computation based on data flow graphs.
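To make the idea of nodes and edges concrete, here is a minimal pure-Python sketch of a data flow graph. It is not how TensorFlow is implemented; the `Node` and `Constant` classes are hypothetical names invented for this illustration. Each node holds an operation, and its inputs play the role of the edges that carry data into it.

```python
import operator


class Node:
    """A graph node: an operation plus the nodes whose outputs feed it."""

    def __init__(self, op, *inputs):
        self.op = op          # the mathematical operation of this node
        self.inputs = inputs  # incoming edges (other nodes)

    def evaluate(self):
        # Pull values along the incoming edges, then apply the operation.
        values = [node.evaluate() for node in self.inputs]
        return self.op(*values)


class Constant(Node):
    """A leaf node that simply emits a fixed value."""

    def __init__(self, value):
        super().__init__(None)
        self.value = value

    def evaluate(self):
        return self.value


# Build the graph for (a + b) * c. Nothing is computed yet.
a, b, c = Constant(2.0), Constant(3.0), Constant(4.0)
total = Node(operator.add, a, b)
result = Node(operator.mul, total, c)

# Evaluating the last node pulls data through the whole graph.
print(result.evaluate())  # 20.0
```

Note that building the graph and evaluating it are two separate steps in this sketch, which mirrors the classic graph-based way of working.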

TensorFlow can perform partial subgraph computation. This feature allows a neural network to be partitioned so that distributed training becomes possible. In this way, subgraph computation enables TensorFlow to support model parallelism.

TensorFlow is cross-platform. It runs on GPUs and CPUs (including mobile and embedded platforms) and even on tensor processing units (TPUs), which are specialized hardware for tensor math.
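One way to check which of these devices are visible on a given machine is sketched below. It assumes a TensorFlow 2.x version in which `tf.config.list_physical_devices` is available (in the earliest 2.0 release it lived under `tf.config.experimental`). The `found` dictionary is just a name chosen for this example.

```python
import tensorflow as tf

# Ask TensorFlow which physical devices it can see, grouped by type.
found = {
    device_type: tf.config.list_physical_devices(device_type)
    for device_type in ("CPU", "GPU", "TPU")
}

for device_type, devices in found.items():
    print(device_type, len(devices), [d.name for d in devices])
```

On a machine without a GPU or TPU, the corresponding lists are simply empty.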

TensorFlow is a Python-friendly open-source library for numerical computation that makes machine learning faster and easier.

TensorFlow is both flexible and scalable, allowing users to move smoothly from research to production, which is why it is a commonly chosen framework.

It is not just a software library, but a suite of software that includes TensorFlow, TensorBoard, and TensorFlow Serving. To get the most out of TensorFlow, we should know how to use all of them in conjunction with one another.

As we mentioned, TensorFlow performs numerical computations in the form of a dataflow graph. At a high level, TensorFlow is a Python library that allows users to express arbitrary computation as a graph of data flows. Data in TensorFlow are represented as tensors, which are multidimensional arrays. Before we explain in more depth what a dataflow graph is, let’s explain what a tensor is.

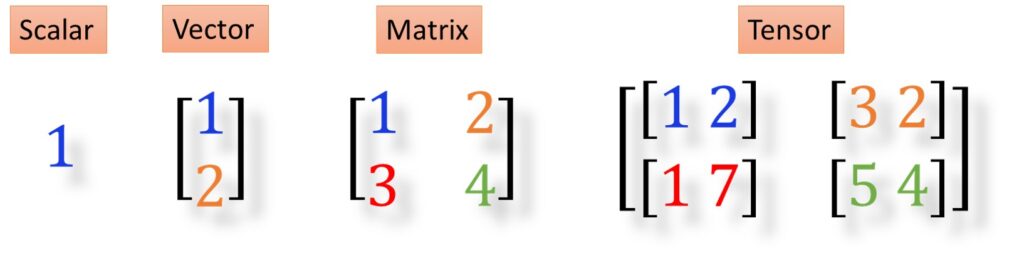

What is a Tensor?

A tensor is an n-dimensional collection of data, identified by rank, shape, and type. A scalar value is a tensor of rank 0 and has an empty shape, []. A vector or a one-dimensional array is a tensor of rank 1 and has a shape of [length]. A matrix or a two-dimensional array is a tensor of rank 2 and has a shape of [rows, columns]. A three-dimensional array would be a tensor of rank 3, and in the same manner, an n-dimensional array would be a tensor of rank n.
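The rank, shape, and type of a tensor can be inspected directly. The following sketch assumes TensorFlow 2.x is installed; the variable names and values are arbitrary examples.

```python
import tensorflow as tf

scalar = tf.constant(3.0)                      # rank 0
vector = tf.constant([1.0, 2.0, 3.0])          # rank 1
matrix = tf.constant([[1, 2, 3], [4, 5, 6]])   # rank 2
cube = tf.zeros([2, 3, 4])                     # rank 3

# Print rank, shape, and data type for each tensor.
for name, tensor in [("scalar", scalar), ("vector", vector),
                     ("matrix", matrix), ("cube", cube)]:
    print(name, tensor.shape.rank, tensor.shape.as_list(), tensor.dtype.name)
```

The scalar reports an empty shape, the vector a shape with a single entry, and the matrix a shape of [rows, columns].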

What is a Computation graph?

TensorFlow provides a utility called TensorBoard. One of TensorBoard’s many capabilities is the visualization of a computation graph.

TensorFlow computation graphs are powerful, yet they can be quite complex. In TensorFlow, a computation is represented as a dataflow graph, as we can see in the following picture. The graph visualization helps us understand the processing structure and makes debugging easier. Nodes in this graph represent mathematical operations, whereas edges represent data that is passed from one node to another.
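Besides looking at a graph in TensorBoard, we can also list its nodes in code. The sketch below assumes TensorFlow 2.x and uses `tf.function` to trace a small Python function into a graph. The function `affine` and its input shapes are made up for this illustration.

```python
import tensorflow as tf


@tf.function
def affine(x, w, b):
    # Two operations: a matrix multiplication followed by an addition.
    return tf.matmul(x, w) + b


# Trace the function for concrete input signatures to obtain its graph.
concrete = affine.get_concrete_function(
    tf.TensorSpec([None, 2], tf.float32),
    tf.TensorSpec([2, 1], tf.float32),
    tf.TensorSpec([1], tf.float32),
)

# Each operation in the graph is a node; print its name and type.
op_types = []
for op in concrete.graph.get_operations():
    op_types.append(op.type)
    print(op.name, op.type)
```

The listing contains placeholder nodes for the inputs and a node for each mathematical operation, such as the matrix multiplication.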

In TensorFlow 1.x, computations define a graph that has no numerical values until it is evaluated. In TensorFlow 2.x, however, computations are executed immediately, as in standard Python code. This immediate execution is called eager execution, and it is the default mode in TensorFlow 2.x.
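A short sketch of eager execution, assuming TensorFlow 2.x with its default settings. The matrices are arbitrary example values.

```python
import tensorflow as tf

# In TensorFlow 2.x this reports True unless eager mode was disabled.
print(tf.executing_eagerly())

a = tf.constant([[1.0, 2.0], [3.0, 4.0]])
b = tf.constant([[1.0], [1.0]])

# The product is computed right away; no session is needed.
product = tf.matmul(a, b)

# The result already holds concrete values we can read as a NumPy array.
print(product.numpy())
```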

What is the core of TensorFlow?

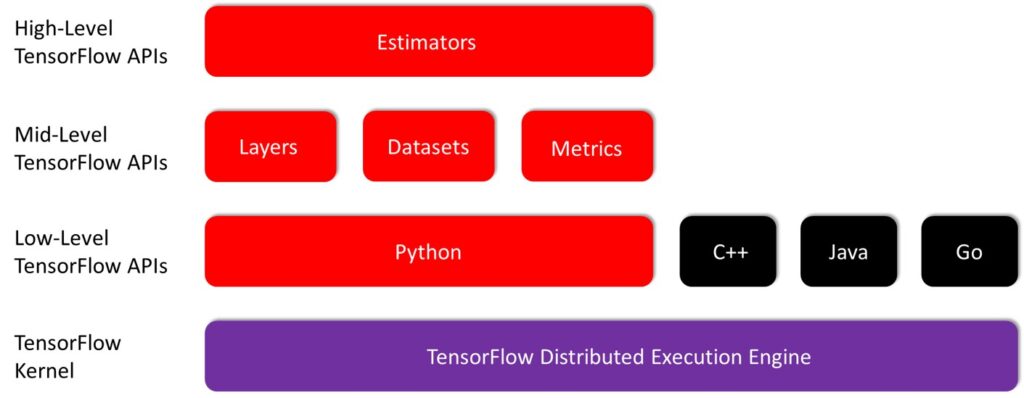

The core of TensorFlow is written in C++. It has two primary high-level frontend languages and interfaces for expressing and executing computation graphs: Python, used by most researchers and data scientists, and C++, which is useful for efficient execution in embedded systems.

TensorFlow can run on multiple CPUs and GPUs. It also runs on 64-bit Linux, macOS, Windows, and mobile computing platforms including Android and iOS.

Why is TensorFlow so flexible?

Key enablers of TensorFlow’s flexibility for data scientists and researchers are high-level abstraction libraries. Abstraction libraries such as Keras and TF-Slim offer simplified high-level access to the lower-level library, helping to streamline the construction of the dataflow graphs, to train them, and to enable inference execution.

TensorFlow has frontends for Python, C, C++, Java, JavaScript, Go, Rust, Julia, C#, and R. The Layers API provides a simpler interface for commonly used layers in deep learning models. On top of that sit higher-level APIs, including Keras and the Estimator API, which make training and evaluating distributed models easier.
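As an illustration of how little code a high-level API needs, here is a minimal sketch using Keras as shipped with TensorFlow 2.x (`tf.keras`). The layer sizes, optimizer, and loss are arbitrary choices for this example and do not come from a real model.

```python
import tensorflow as tf

# A tiny feed-forward network: 3 inputs -> 4 hidden units -> 1 output.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(3,)),
    tf.keras.layers.Dense(4, activation="relu"),
    tf.keras.layers.Dense(1),
])

# Keras wires up the training procedure from a few keywords.
model.compile(optimizer="sgd", loss="mse")

# Run inference on a dummy batch of two examples.
outputs = model(tf.zeros([2, 3]))
print(outputs.shape)
print(model.count_params())
```

The graph construction, weight creation, and inference call are all handled by the library behind these few lines.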

Let’s now write the simplest piece of code in TensorFlow 2.x. With this code, we will just print Hello world!
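A minimal sketch of such a program, assuming TensorFlow 2.x is installed. The variable name `message` is our own choice.

```python
import tensorflow as tf

# Create a constant string tensor.
message = tf.constant("Hello world!")

# With eager execution, the value is available immediately.
# String tensors hold bytes, so we decode them before printing.
print(message.numpy().decode("utf-8"))
```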

In TensorFlow 1.x, running a model is usually done in two parts: building the computational graph and then running the graph in a TensorFlow session. In TensorFlow 2.x, a model runs eagerly or, to say it differently, immediately.
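The difference can be sketched side by side. The example below assumes TensorFlow 2.x and uses the `tf.compat.v1` compatibility module to reproduce the 1.x style; the computation `x * 2 + 1` is just a toy example.

```python
import tensorflow as tf

# TensorFlow 1.x style, part 1: build the computational graph.
graph = tf.Graph()
with graph.as_default():
    x = tf.compat.v1.placeholder(tf.float32, shape=[], name="x")
    y = x * 2.0 + 1.0  # no value yet, only a node in the graph

# TensorFlow 1.x style, part 2: run the graph in a session.
with tf.compat.v1.Session(graph=graph) as sess:
    graph_result = sess.run(y, feed_dict={x: 3.0})

# TensorFlow 2.x style: the same computation runs immediately.
eager_result = (tf.constant(3.0) * 2.0 + 1.0).numpy()

print(graph_result, eager_result)
```

Both approaches give the same number, but the eager version needs neither a placeholder nor a session.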

In the next post we will learn about TensorFlow data model elements.