#013 Machine Learning – K-means clustering

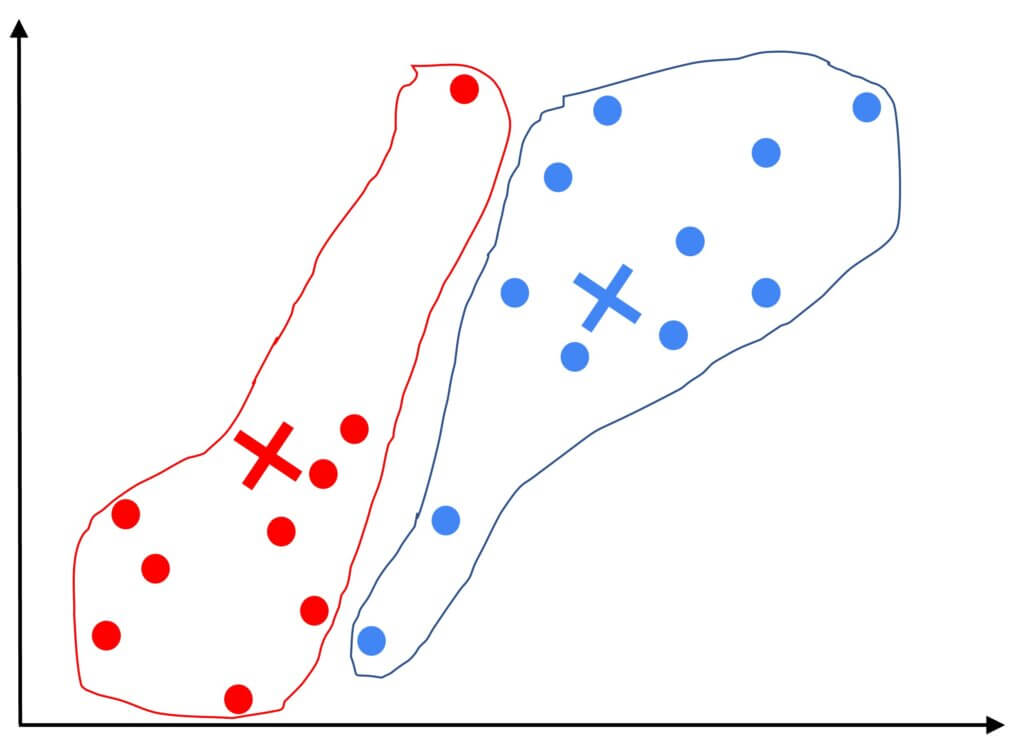

Highlights: Hello and welcome. In the previous posts, we talked in great detail about supervised learning. In this post, we’ll talk about unsupervised learning. You’ll learn about clustering algorithms, which group data into clusters, and in particular about one of the most popular of them: k-means clustering.

Tutorial overview:

- What is clustering?
- K-means algorithm
- K-means algorithm in Python

1. What is clustering?

A clustering algorithm looks…
